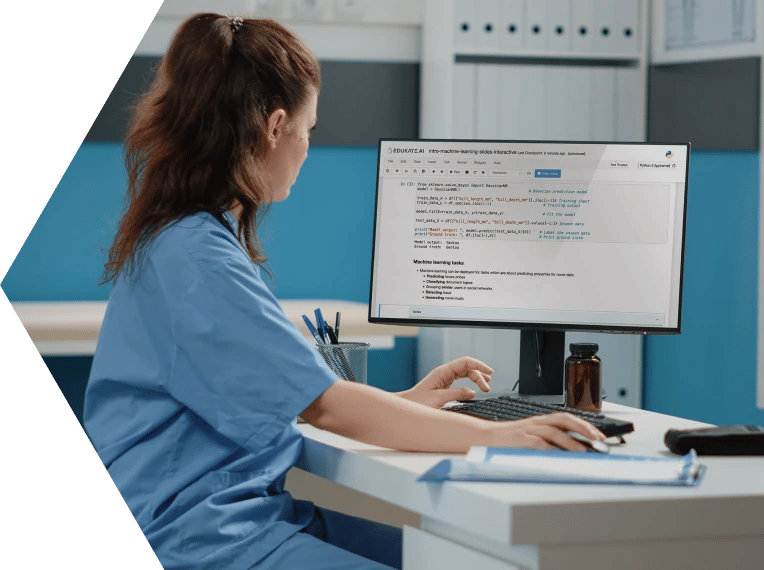

Digital Transformation

Develop professionals who can lead and manage the transformation needed to adopt new technologies such as AI.

Upskill your workforce and accelerate your digital transformation with expert technical programmes designed to create impact.
